🧠 Optical Illusions Explain Why AI Hallucinates

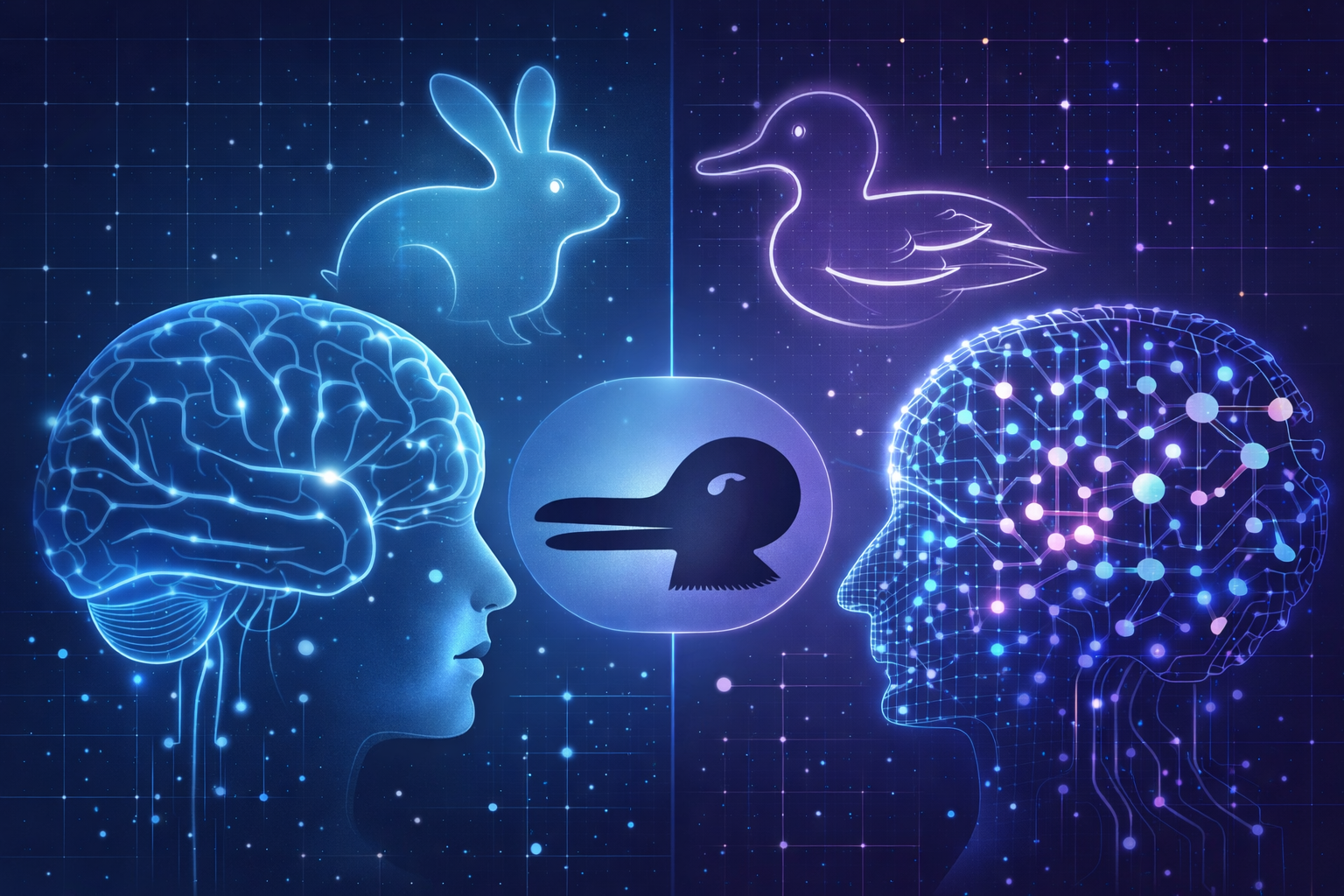

Optical illusions reveal something important about intelligence — both human and artificial.

Humans See Things That Aren’t There

Optical illusions are fascinating because they reveal a weakness in how our brains process information. Consider the classic illusions:

- Two lines that appear different lengths but are identical

- Static images that appear to move

- Shapes that seem three-dimensional but are actually flat

Your brain confidently perceives something that does not exist. Even after you learn the truth, the illusion still works. Why? Because your brain is not directly seeing reality. It is interpreting patterns.

The Brain Is a Prediction Machine

Your brain constantly asks questions like:

- What object is this likely to be?

- What shape fits the pattern I see?

- What interpretation best matches past experience?

AI Models Work the Same Way

Large language models and image recognition systems operate using a similar principle. They do not actually “know” facts in the way humans imagine. Instead, they predict:

- What word is most likely next

- What pattern most resembles a known object

- What interpretation best fits the training data
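To make "predicting the most likely next word" concrete, here is a minimal sketch. The scores are invented for illustration and do not come from any real model; they stand in for what a system might learn if "Sydney" appears next to "Australia" more often than "Canberra" does.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(s - peak) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

# Hypothetical scores for the word after "The capital of Australia is".
# These numbers are made up; a real model would compute them from its weights.
scores = {"Sydney": 2.1, "Canberra": 1.9, "Melbourne": 0.7}

probs = softmax(scores)
prediction = max(probs, key=probs.get)  # pick the most probable word
print(prediction, round(probs[prediction], 2))
```

In this toy setup the predictor picks the most probable word, not the true one. Nothing in the procedure checks the answer against reality, which is the gap where a hallucination can appear.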

How Image Recognition Can Be Fooled

Researchers have discovered that tiny changes to images can fool AI vision systems. In several famous examples, small, carefully crafted changes, often barely noticeable to a person, caused a neural network to classify:

- A turtle as a rifle

- A panda as a gibbon

- A stop sign as a speed limit sign
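The mechanism can be sketched with a toy linear classifier. This is an illustration in the spirit of the fast gradient sign method, not a reproduction of any of the attacks above: the eight "pixels", the weights, and the labels are all hypothetical. Each pixel is nudged by a small amount in whichever direction lowers the score for the current label.

```python
def score(weights, pixels):
    """Linear classifier: a positive score means 'panda', otherwise 'gibbon'."""
    return sum(w * x for w, x in zip(weights, pixels))

def label(weights, pixels):
    return "panda" if score(weights, pixels) > 0 else "gibbon"

def sign(value):
    return (value > 0) - (value < 0)

# Hypothetical 8-pixel image and classifier weights (values are made up).
weights = [0.5, -0.4, 0.3, -0.6, 0.2, -0.5, 0.4, -0.3]
image = [0.8, 0.5, 0.7, 0.4, 0.5, 0.5, 0.6, 0.5]

# For a linear model the gradient of the score with respect to each pixel
# is just its weight, so stepping against sign(weight) lowers the score.
epsilon = 0.05  # maximum change allowed per pixel
adversarial = [x - epsilon * sign(w) for x, w in zip(image, weights)]

print(label(weights, image), "->", label(weights, adversarial))
```

No single pixel moves by more than 0.05, yet the many small nudges add up across the whole image and push the score over the decision boundary. Real vision models are far larger, but this accumulation effect is one commonly cited reason they can be fooled.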

Hallucinations Are Not Bugs — They’re a Property

A common misconception is that hallucinations are a defect that can be completely eliminated. They cannot. They are a natural consequence of how prediction-based intelligence works. The same pattern inference that produces hallucinations is what allows AI to:

- Summarize documents

- Write software

- Reason through problems

What This Means for the Future of AI

Understanding hallucinations through the lens of optical illusions leads to a more realistic expectation. AI systems will not become perfect truth machines. Instead, the future likely looks like:

- AI systems that check themselves against external data

- Architectures combining probabilistic reasoning with verification

- Humans acting as high-level validators
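The first two ideas can be sketched as a check that compares a model's claim against a trusted source before accepting it. Everything here is a simplified assumption: the `trusted_facts` dictionary stands in for an external database or search index, and `verify` is a hypothetical helper, not part of any real library.

```python
def verify(claim, trusted_facts):
    """Compare a (topic, answer) claim against a trusted lookup table."""
    topic, answer = claim
    if topic not in trusted_facts:
        return "unverified"  # nothing to check against: escalate to a human
    if trusted_facts[topic] == answer:
        return "supported"
    return "contradicted"

# Hypothetical stand-in for an external data source.
trusted_facts = {"capital of Australia": "Canberra"}

# Hypothetical model outputs, each a (topic, answer) pair.
claims = [
    ("capital of Australia", "Sydney"),
    ("capital of Australia", "Canberra"),
    ("capital of Atlantis", "Poseidonis"),
]

for claim in claims:
    print(claim, "->", verify(claim, trusted_facts))
```

The "unverified" case is where the third idea comes in: when the system has no external evidence either way, a human validator decides.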